This two-part blog series by 3ie Senior Fellow Michael Bamberger underscores the need for and challenges to designing ‘complexity-responsive’ evaluations. In this second part, Bamberger recommends a practical five-step approach to address complexity-responsive evaluation in a systematic way.

Ignoring complexity can lead to over-estimating the real-world impacts of development interventions. The first blog of this series explained how program implementation and outcomes are affected by multiple interacting factors. For evaluations to be complexity-responsive, it is imperative to find a balance between the theoretical difficulties of evaluating complex problems (Birhane 2021; Byrne and Callaghan 2014) and the need to provide practical guidance to policymakers on how to address complexity in the real world. The five-step approach described in this blog aims to provide an operationally useful solution to these challenges.

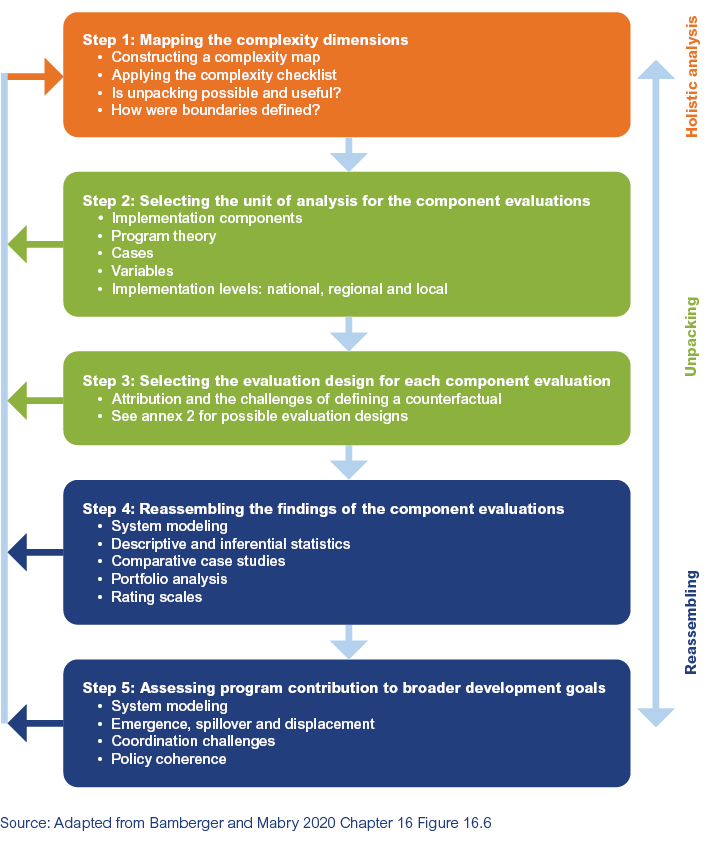

Step 1: Mapping the complexity dimensions

Complexity-responsive evaluations can be used, with appropriate adjustments, at the level of projects, programs, large-scale sector interventions, national and international initiatives or at the policy level. For simplicity, we will refer to all of these as programs. The analysis begins by using systems analysis (Williams and Hummelbrunner 2011) and other appropriate tools to map the program context and to assess the complexity level of the four dimensions detailed in the previous blog. A complexity checklist is then applied to rate the level of complexity on each dimension. In addition to providing stakeholders with an understanding of what complexity means for their program, the checklist also helps decide whether the program is sufficiently complex to merit the additional investment of time and resources required to conduct a complexity-focused evaluation.
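As a rough illustration of how checklist ratings might be tallied, the sketch below averages item ratings within each dimension and applies a cut-off to decide whether a complexity-focused evaluation seems warranted. The dimension labels, the 1-5 scale, the threshold and the "at least two dimensions" rule are all hypothetical placeholders chosen for the example. They are not taken from the actual checklist.

```python
from statistics import mean

# Hypothetical item ratings (1 = low complexity, 5 = high complexity),
# grouped by dimension. Labels and scale are illustrative assumptions.
ratings = {
    "dimension_1": [4, 5, 3],
    "dimension_2": [2, 3, 3],
    "dimension_3": [5, 4, 4],
    "dimension_4": [3, 2, 2],
}

THRESHOLD = 3.5  # assumed cut-off for calling a dimension "highly complex"


def dimension_scores(ratings):
    """Average the item ratings within each dimension."""
    return {dim: mean(items) for dim, items in ratings.items()}


def merits_complexity_evaluation(scores, threshold=THRESHOLD, min_dimensions=2):
    """Return (decision, high_dims): decision is True when at least
    `min_dimensions` dimensions score at or above `threshold`."""
    high_dims = [dim for dim, score in scores.items() if score >= threshold]
    return len(high_dims) >= min_dimensions, high_dims


scores = dimension_scores(ratings)
decision, high_dims = merits_complexity_evaluation(scores)
print(scores)
print(decision, high_dims)
```

In practice the decision rule would be agreed with stakeholders rather than hard-coded. The point of the sketch is only that the checklist yields a per-dimension profile, not a single number.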

Step 2: Breaking the project into evaluable components and identifying the units of analysis for the component evaluation

It is extremely difficult to conduct a holistic evaluation of a complex program, and most conventional evaluation tools address complexity unsystematically, if at all. So, the program is unpacked into a set of components, each of which can be separately evaluated using conventional evaluation methods. Annex 3 gives an example of the different kinds of components that could be used for evaluating a program to support the decentralization of transportation services.

Although it is possible to combine more than one type of analysis, this is usually not done due to cost and time. However, if resources permit, it is useful to combine a few in-depth case studies (Yin 2008 and 2012) with any of the other units of analysis. These case studies are one of the tools used in process analysis to provide a reality test comparing what actually happens on the ground with what is reported in program documents. It is always important to ensure that the selected units for the analysis address key elements of the program and are sufficiently representative to support generalization to the whole program.

Step 3: Selecting the evaluation designs for assessing the components

The component evaluation of units of analysis can use any of the conventional quantitative, qualitative or mixed-methods evaluation designs (see annex 2). The choice will depend on the nature of the program and the components being evaluated, data availability, time and resources and, to some extent, client preferences. An exciting recent development is the rapid growth of big data and data analytic tools, which offer important benefits for the evaluation of complex programs (see annex 4). For more on big data approaches, see 3ie’s other blogs on the topic here and here, and Bamberger and Mabry 2020 Chapter 18.

Big data also opens up the possibility of applying systems analysis to permit more sophisticated kinds of analysis (see below).

Step 3 is where most conventional evaluations end, and if each individual component is rated positively, it is typically assumed that the project has achieved its objectives. However, the present approach includes two additional steps for the following reasons:

- The three-step design ignores how project implementation and outcomes can be significantly affected by external factors (e.g. political, organizational, legal, socio-cultural, demographic, climatic).

- Many evaluations implicitly assume that coordination among different agencies works as planned and that all of them fully support the program and its objectives. However, this is rarely the case, because agencies differ in their priorities, methods of operation and levels of interest in supporting the program.

- There are many programs where each component is rated positively but where there is no measurable impact on the broader program objectives. This is often due to design failures, particularly when the scale of the intervention is not sufficient to impact the underlying causes of the problems being addressed.

Figure 1: The five-step unpacking and reassembling approach to evaluating complex programs

Step 4: Reassembling the findings of the individual component evaluations to assess overall program outcomes

The fourth step involves the challenging task of reassembling the findings of the individual component evaluations to assess how final outcomes are influenced by the complexity dimensions identified in Step 1. There is currently no standard approach, but some of the possible approaches include:

- Linking different components of a program using systems analysis (see annex 5)

- Overviewing different components of a large, multi-level program using portfolio analysis (see annex 6).

- Using comparative case studies to compare configurations of household or group attributes that are necessary to achieve a particular program outcome, or that inhibit the achievement of an outcome.

- Linking and aggregating descriptive and inferential statistics.

- Using rating scales to value different dimensions of performance.
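To make the rating-scale option above a little more concrete, here is a minimal sketch of how component ratings might be rolled up and compared with an independent rating of program-level outcomes. The component names, weights, 1-5 scale and tolerance are hypothetical and chosen only for illustration. This is one possible way to flag the situation described earlier, where every component is rated positively but the broader objectives show little change.

```python
# Hypothetical component ratings on an assumed 1-5 scale, with assumed weights
# reflecting each component's importance to the overall program.
components = {
    "component_a": {"rating": 4, "weight": 0.5},
    "component_b": {"rating": 5, "weight": 0.3},
    "component_c": {"rating": 3, "weight": 0.2},
}


def weighted_program_rating(components):
    """Weighted average of component ratings (weights need not sum to 1)."""
    total_weight = sum(c["weight"] for c in components.values())
    weighted_sum = sum(c["rating"] * c["weight"] for c in components.values())
    return weighted_sum / total_weight


def flag_reassembly_gap(component_rating, program_outcome_rating, tolerance=1.0):
    """True when the rolled-up component rating exceeds the program-level
    outcome rating by more than `tolerance` scale points."""
    return component_rating - program_outcome_rating > tolerance


overall = weighted_program_rating(components)
program_outcome_rating = 2  # hypothetical independent program-level rating
gap = flag_reassembly_gap(overall, program_outcome_rating)
print(round(overall, 2), gap)
```

A flagged gap would not explain anything by itself. It would simply point the evaluator back to the complexity dimensions mapped in Step 1, such as external factors or coordination problems, as candidate explanations.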

Step 5: Assessing the program contribution to broader development goals

Many programs are intended to contribute to broader development objectives such as the Sustainable Development Goals (SDGs) or national development objectives. To assess these broader goals, issues such as the following can be addressed:

- Identifying broad issues and challenges: Using the complexity map, combined with any elements of systems analysis that may be available, to assess program contribution to broader development goals.

- Emergence, spill-over and displacement: Programs and the context in which they operate change, and it is important both to assess how this affects the interventions and outcomes and how well the program design can adapt. The scope or boundaries of who is affected (positively and negatively) can also change over time, so spill-over and displacement must be assessed (Bamberger, Vaessen and Raimondo 2016 Chapter 7).

- Coordination challenges: As program goals and scope become broader and more complex, the coordination challenges also become more difficult to assess. The systems analysis tools discussed earlier become important in view of this.

- Policy coherence: Many development objectives are implemented over long periods of time, and because they must often respond to international agreements as well as national development strategies, there is a danger that these policies may become fragmented. Consequently, it is important to assess policy coherence.

Conclusion

The failure to incorporate a complexity focus is one of the most serious limitations of most development evaluations. The failure is partly due to a certain conservatism among both the agencies that commission evaluations and the evaluation community, which continue to request and use traditional evaluation approaches that do not incorporate complexity. It is also due to the fact that the evaluator’s toolbox includes very few methodologies that can address complexity.

There are a number of reasons why the pressures to incorporate complexity and the ability to do so are likely to increase. On the demand side, both the international community and governments are becoming increasingly concerned about global challenges such as climate change and humanitarian issues, all of which require complexity-responsive evaluation designs. On the supply side, there is a growing theoretical and methodological literature on complexity which is gradually developing new research tools. However, the real game-changer is the rapid expansion of big data and data analytics, which are providing affordable, accessible and methodologically robust tools for assessing complexity. So, the challenge for evaluators is to become familiar with these new tools and to build the kinds of collaboration and organizational structures to develop, test and apply these tools as a standard part of the evaluator’s toolkit.

This is the second blog in a new series by 3ie's Senior Fellows. Experts in their fields, our fellows support our work in a variety of ways. Visit the Senior Fellows Program page to learn more.

Comments

Practical question: How does Step 1, "Mapping the complexity dimensions", especially the use of the checklist, enable Step 2, "Breaking the project into evaluable components and identifying the units of analysis for the component evaluation"?

Rick, though to be fair, an elephant delivering a mouse would be INCREDIBLE. It would be a miracle. Not a bad outcome at all.

(And as a small point that maybe you won't be aware of, but the word you used to describe Inuit people in your comment is considered a really horrible racial slur in the circumpolar regions.)

I found the article very informative and helpful in conceptualizing complexity and in explaining and applying it. I am curious about how we could determine scale/project size more relationally, so that complexity awareness is not a preserve of 'large' WB projects, given that complexity is everywhere.

I very much agree with this comment.

Follow-up to the comments received to date (July 28)

The point about the need to capture the perspectives of different groups on complexity (and other issues) is very important. This is related to complexity theory's work on boundary definitions and critical systems heuristics. What one group may consider as complex (or important) may be considered by another to be relatively simple or unimportant.

This is also related to Justus' point about ensuring that issues such as complexity are not just considered the province of large funding agencies and development banks. It is my impression that many NGOs are very interested in complexity, as they may be more aware than donors of the need to fully understand the context in which programs are designed and implemented.

Concerning Rick's question about how Step 1 of the complexity evaluation framework helps select the units of analysis for Step 2: Step 1, combining the complexity map with the checklist, can help identify the dimensions and sub-dimensions that have the highest complexity scores. The application of different systems analysis tools can also help identify complexities and problems of coordination among the different stakeholders. Both of these can help identify some of the potentially important units of analysis for Step 2. For example, if many areas of the intervention being studied have very low levels of complexity, perhaps they may be considered less important to study in Steps 2 and 3. Of course, this is not the only factor to take into consideration when selecting the units of analysis, but it will certainly be one of the criteria.

Thank you for your helpful comments, and as I mentioned in responding to questions on the earlier post, this complexity framework is still a work in progress.

Re names used for people "in the circumpolar regions", this reflected my ignorance, rather than ill intent.

This is really interesting and useful!

I would like to ask permission to translate (in French) the complexity checklist [https://www.3ieimpact.org/sites/default/files/2021-07/complexity-blg-An…].

I'd love to present this as a resource in an evaluation training program by ENAP, a public university specialised in public administration. [https://www.globalevaluationinitiative.org/events/core-program-programm…]

Thank you.

As I have said before, complexity theory sometimes seems a bit like an elephant that has laboured long and hard only to deliver a mouse. And this statement is coming from someone with a fairly long-standing interest in the topic (Davies, 2004, 2005). This wariness re-arises when I see a checklist for measuring complexity, for two reasons. One is that a lot of effort has gone into the issue of complexity measurement over the years (see Melanie Mitchell, 2009:94-114), but I can’t see any use of that thinking here (the fault could lie in either direction, the source or the prospective user). Secondly, complexity is very much “in the eye of the beholder”, i.e. it depends on what you are looking for. A single-cell life form may not be very complex from your or my day-to-day perspective, but there are now people researching the role of quantum-level events within single cells (Radio 4 this am) – that sounded very complex.

If we take this latter reason seriously then perhaps we should take a more ethnographic perspective on complexity – we should look to find where people are seeing complexity and where they are not, and the implications thereof. Eskimos famously have many different ways of describing snow and ice, and for very good reasons it would seem. In today’s “New Scientist” the same species of fish have been found to develop more complex brain structures when they are trying to survive in more complex environments, versus less complex, …where complexity is measured in relatively simple terms of biodiversity. When it comes to development aid programmes, we should pay attention to where the programme managers have developed detailed versus less detailed forms of knowledge, the reasons why, and the consequences. Developing detailed knowledge takes time and has a cost, so it can’t happen on all fronts. We should look not only at how and where they differentiate the world in more detail, but also at the differences in the frequency with which that knowledge is updated in various areas.