The COVID-19 pandemic has created new hype around the potential for new, ‘big’, data sources to revolutionize data collection, and change the landscape of evidence for policy. Non-traditional data sources - like satellite imagery, call detail records, and social media posts—do offer exciting opportunities to track and understand processes on Earth across space and time like never possible before. Importantly, these technologies make it possible to collect detailed data without ‘boots on the ground’, which has proved particularly helpful in the face of global lockdowns.

Given all these advantages, should we expect big data to replace traditional surveys going forward? We don’t think so. Although these new technologies have great potential, they can't answer every type of question, they raise data transparency questions, and they often require some validation via more old-school methods (e.g. ground surveys). Even as we adjust to a new normal, post COVID, surveys will continue to play an important role for policy evaluation. Here’s why.

First, not all technologies fit all purposes. For example, for various impact evaluation applications, free and open source remotely sensed imagery will simply not be high enough resolution to be useful. Some of the most promising applications: counting number of trees, detecting specific types of crop fields, or even counting the number of buildings or structures in a given village – require a higher resolution than is currently freely available. There are also crucial indicators that even high-resolution satellite data cannot measure, like employee productivity or people’s well-being or satisfaction. Other challenges are more technical: atmospheric conditions, cloud coverage and sensor limitations can all limit the information in a satellite image.

Second, there are important issues with bias and coverage in big data sources. From the world of surveys, many of us are used to working with representative samples, but big data sources are very different. The type of bias varies by source and the directionality is not always clear.

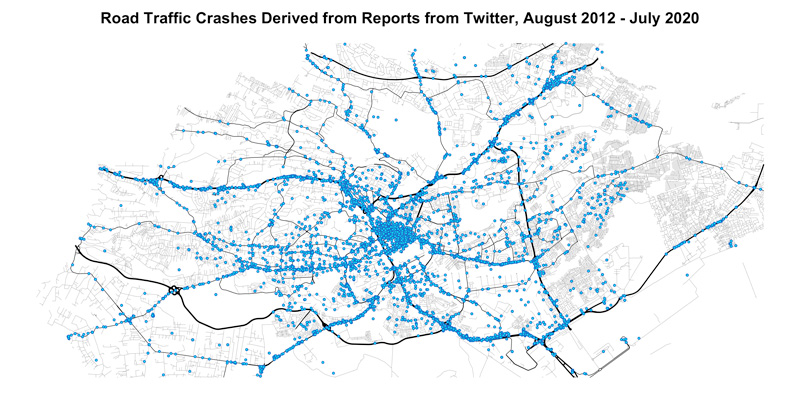

One example of bias comes from a project in Nairobi, where a World Bank Development Impact Evaluation research team is working with the government to improve road safety by identifying ‘hotspots’ for traffic accidents. Two data sources, administrative data on traffic accidents from the national police and accident reports scraped from twitter, tell very different stories about where and when traffic accidents take place across the city. This example shows how comparing data sources, and integrating them with as much additional information as possible, is very important to understand their accuracy and coverage.

Issues with algorithm training or data interpretation can also induce bias, as is true more generally with machine learning and artificial intelligence. After all, it is people who develop these algorithms or provide the data to train the machine. It is often a challenge to design a robust enough algorithm that it can be generalized across space and time, and not apply only to a specific limited area. Survey data or another source of "training data" is often necessary to ground truth algorithms used to analyze telecom or satellite data.

Third, there are potentially major data quality and transparency issues. The dramatically greater volume, variety and velocity of data and complexity of analytical methods makes it much harder to manage, analyze and share data and methods. Replicability is a significant concern. For example, Lancet and the New England Journal of Medicine recently retracted studies on the use of hydroxychloroquine for treating COVID-19 because of such an issue. The large database on which the studies were based, developed using machine learning with medical records from 671 hospitals on six continents, was from a private company which would not release adequate replication data. The data ultimately couldn’t be validated.

All of the above is not to say big data does not have an important role to play. Quite the contrary! We want to emphasize that uses of new remote-sensing data should focus on those aspects of impact evaluations where it is most likely to add value. One illustrative example is where satellite or airborne imagery of agricultural fields are likely to be more available across space and time, accurate, unbiased, affordable, and faster for measuring crop yields than self-reports or direct observation. Other promising applications abound.

New applications for remote sensing and big data will continue to emerge, and new sources of remotely sensed data will become accessible. As this happens, the impact evaluation community – and the international development community more broadly – should embrace the opportunities to advance the field while also considering the limitations that can easily get lost amid the enthusiasm of new technologies.

Learn more about exciting work in this space being done by CEGA, NLT, 3ie, and the World Bank: The Geospatial Analysis for Development (Geo4Dev) Initiative is a hub for research and training that exploits geospatial data for the targeting, design, and evaluation of social and economic development programs, especially in low- and middle-income countries. Through the initiative, the Center for Effective Global Action (CEGA), New Light Technologies (NLT), the International Initiative for Impact Evaluation (3ie), and the World Bank are driving the development of new data, tools and methods for conducting geospatial analysis across diverse sectors including agriculture and food security, urbanization, climate change, impact evaluation, humanitarian crisis, and disaster response. Geo4Dev brings together a network of leading researchers, as well as government ministries, NGOs, private enterprises, and funding partners to inspire and support new research collaborations, share knowledge, and build capacity to utilize geospatial data, tools, and approaches.

You can learn more about 3ie’s work in this area on our website.

Comments

Great insights in this blog post, thanks for highlighting the continued need for 'traditional' field surveys. It would be good to mention that there exists 'bridging' technologies that can improve the efficiencies of data collection through digitization of ground surveys. For example, mobile/tablet based platforms such as ODK (https://getodk.org/) or optical character recognition systems that can digitize data collected from paper forms (https://qed.ai/scanform/). It's also important to note that Big Data and traditional field surveys are not mutually exclusive, rather, there are impactful synergies from strategically integrating the two approaches together. This was highlighted at the recent convention of the Big Data Platform of the CGIAR https://bigdata.cgiar.org/blog-post/2020-convention-session-big-data-to…

This is enlightening to read. Limitations or bias related to the big data source should be well thought of before hand. In a lot of situations, both traditional and big data will/should be used to answer research questions.

I enjoyed the blog. Big data also does not, usually, permit an analysis of multiple conditions related to the same person (do they lack good nutrition and safe environmental conditions). For those working on multidimensional measurement, we are realising that surveys will be required for some time to come, although increasingly they can and should be augmented and supplemented by geospatial data.