As we mark 3ie’s 15 years, it’s an opportune time to take stock of the “state of the evidence”, using one of our major contributions to the field of evidence use: the Development Evidence Portal (DEP). The Portal makes evidence about the effectiveness of development interventions accessible to a large constituency of evidence users, funders, and decision-makers across the globe. Its user-friendly interface and advanced search and filtering options make scanning through more than 11,000 impact evaluations and 1,000 systematic reviews easy and time-efficient. As the world’s largest repository of this type of evidence, the DEP also provides a wealth of data about the evidence base for decision-making in development. In a new blog series, we’ll be sharing glimpses of what DEP data can tell us about this evidence base.

One of our favourite ways to glean insights from the DEP is to get a sense of where there is evidence and where there isn’t. The “where” can be conceptual—e.g., which sectors are well studied? Which outcomes aren’t being measured enough?—or literal, as in: which regions and countries are sites of lots of impact evaluations, and where are the “evidence deserts”?

So, our first few blogs in this series will be looking at the distribution of evidence in different regions. Each post will highlight a particular region and talk about the volume of available evidence and any interesting patterns in the country-level breakdown, the types of interventions or outcomes that tend to be studied in the region, the evaluation methods used, and so on.

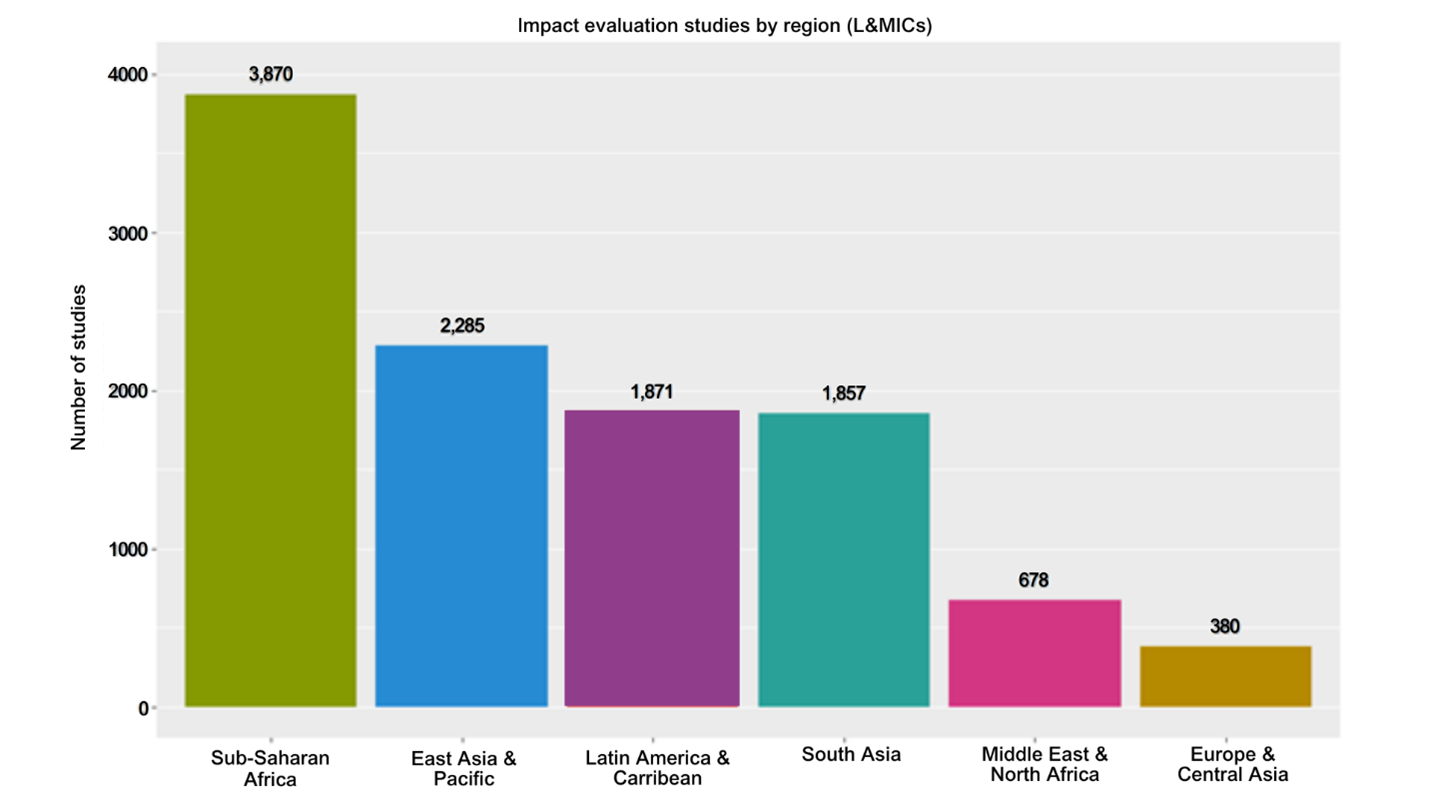

As a preview, the chart below shows the number of impact evaluations conducted in each region. The focus of impact evaluation research on Sub-Saharan Africa makes sense given that most of the world’s poorest countries are located there, so arguably this is where evidence is most needed.

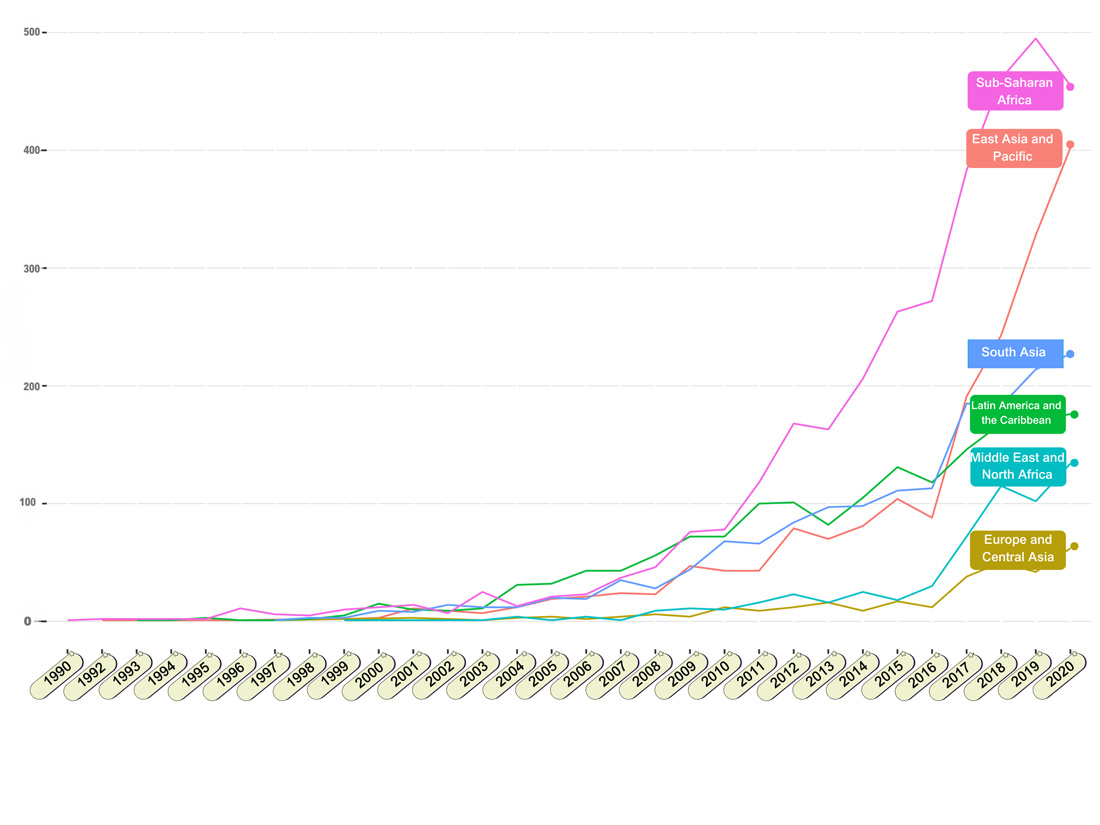

But the raw number of evaluations per region masks some interesting trends in the evolution of the evidence base over time. Looking at the chart below, we see that Latin America jumped to an early lead, consistent with the lore that the modern age of impact assessment in development began with evaluations of large social welfare programmes in Latin America (e.g., Progresa/Oportunidades in Mexico and Bolsa Familia in Brazil). The production of impact evaluations in Latin America continued to grow at a moderate pace, but attention to Sub-Saharan Africa (SSA) revved up in the late aughts, and the number of evaluations per year in SSA has increased steadily since. East Asia now occupies the number two slot in total evaluations because of a very rapid increase in the number of evaluations in the last few years, but more on that when we do our deep dive on that region.

Watch this space as we bring you some insight into where the evidence is. Our plan is to focus most of the upcoming “Insights from DEP” blogs on regional deep-dives, but we also plan to look at other things the DEP data can tell us, like the distribution of evidence by thematic areas, the top funders of impact evaluations and systematic reviews, and how much attention (if any) the research pays to considerations of gender and equity. If you have suggestions for other aspects of the evidence we can look at using the DEP, please let us know!

Read the other 'state of the evidence' blogs in the series:

Unveiling trends in impact evaluations across Sub-Saharan Africa

Unveiling trends in impact evaluations across Latin America and the Caribbean

Insights from the Development Evidence Portal – the Middle East and North Africa