When we launched our blog series about ethics in social science research, we noted that we want to ask ourselves the right questions at the right times, even when there are no easy answers. We know research teams face many ethical questions as they design studies, and our series of blogs has only discussed a few issues so far: Does a state of scarcity or equipoise make it ethical to withhold an intervention from a control group? When and how should we pay research participants? But researchers must navigate many more decisions related to ethical conduct and face barriers when doing so.

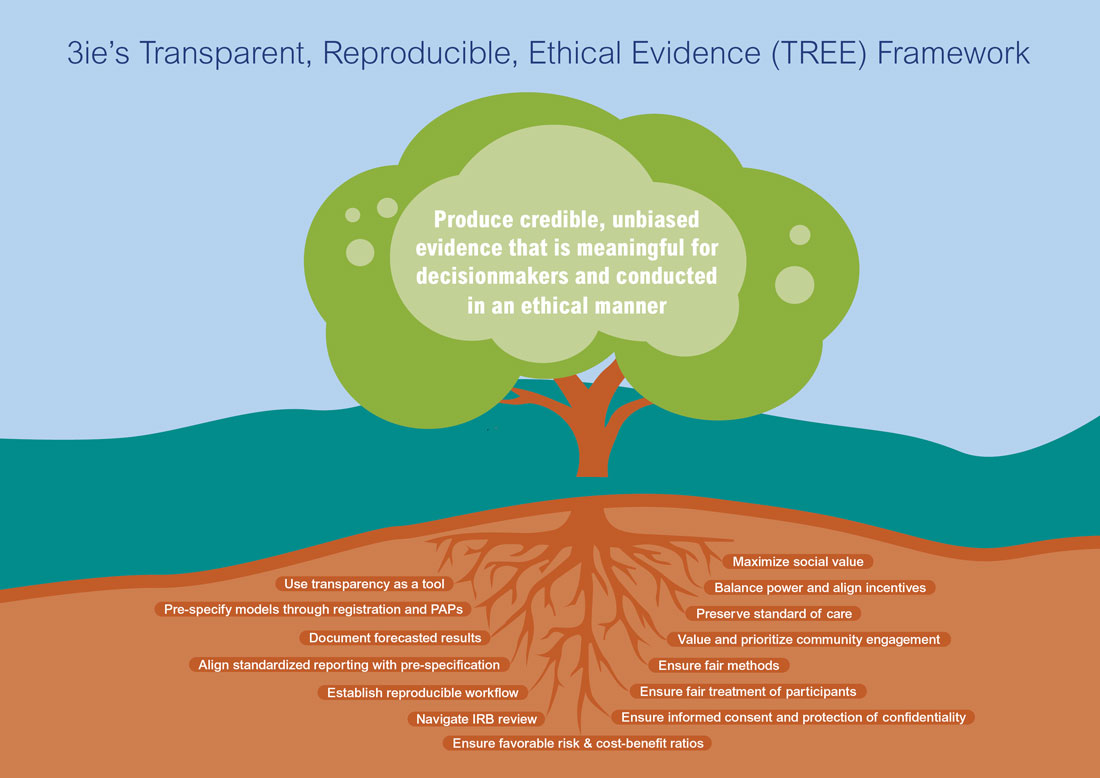

At 3ie, we are refining a process to help research teams consider the ethics questions raised in social science research and document their decisions. Our Transparent, Reproducible, and Ethical Evidence (TREE) Review Framework complements the necessary work of Institutional Review Boards (IRBs). It helps ensure that we do not outsource our ethical judgement to them, that we update our ethics review system to respond to the changing needs of the 21st century, and that we continue to foster scientific integrity. The objectives of the TREE Review Framework within 3ie are to: (i) establish ethical standards; (ii) better integrate TREE best practices into research workflows; and (iii) establish timely, independent oversight of the risks research teams face in producing credible, meaningful, ethical evidence.

Why do we think this TREE Review Framework is important? Our community makes a moral argument that motivates investments in rigorous evaluations of policies and interventions: More evidence is better than less evidence in decision-making. We see the social value of this type of evidence generation when it informs better outcomes and when it challenges our existing beliefs about what works. However, there are three key risks facing this argument:

- Risks to credibility of evidence. Designing and implementing rigorous research is fraught with risks, including studying solutions that do not align with problems, survey design errors, and poor data quality. In addition, failures to replicate study results across large bodies of evidence have called into question the credibility of published research findings. This has drawn attention to additional sources of research waste and risks to credibility, including perverse incentives for researchers to selectively report findings, p-hack analyses, or use other researcher degrees of freedom to reach a particular finding. A lack of access to data and code can also prevent independent verification of findings. And it remains unknown how prevalent outright fraud and data manipulation are, though a recent example highlights the risk.

- Risks to usability of evidence. Decision-makers face internal and external constraints which block evidence use. Internal constraints include individual biases, such as overconfidence and availability bias, which limit decision-makers’ use of evidence to update or course correct decisions otherwise based on intuition and experience. External constraints can include poor timing, availability, and relevance of the evidence, as well as political pressures to make certain decisions regardless of evidence.

- Risks to research participants and staff. Evidence generation often relies on collecting personally identifiable information and sensitive data on human subjects, including financial data, employment data, or biomarkers. These human subjects can have a range of vulnerabilities, which can affect a participant’s autonomy and their ability to choose whether to participate in research. Beyond vulnerability, human subjects can face liability to harm. Risks of harm can include worse outcomes resulting from the studied intervention; feelings of exploitation, research fatigue, or re-traumatization during long surveys; or a loss of confidentiality. In some cases, the data collected on human subjects may be used for harm if it falls into the wrong hands.

The good news is that the social science research community has many tools and best practices to address these risks. The open science movement has elevated best practices in transparency and reproducibility to improve research credibility, and there are many movements around responsible data management. Practices include pre-specification through registration and pre-analysis plans, forecasting of results, use of standardized reporting and alignment with pre-specification, and de-identified data and code sharing. Evidence gap maps and community engagement can strengthen research questions and priorities, while more transparency in individual studies can inform evidence synthesis products, such as systematic reviews. At 3ie, we are also establishing stronger tools and metrics for measuring use of evidence. Ethical principles – beneficence, respect for persons, and justice – underlie regulations that support IRBs and research teams as they navigate ethical dilemmas. And research organizations are turning their attention to how we ensure more ethical conduct of research, such as ensuring participant dignity.

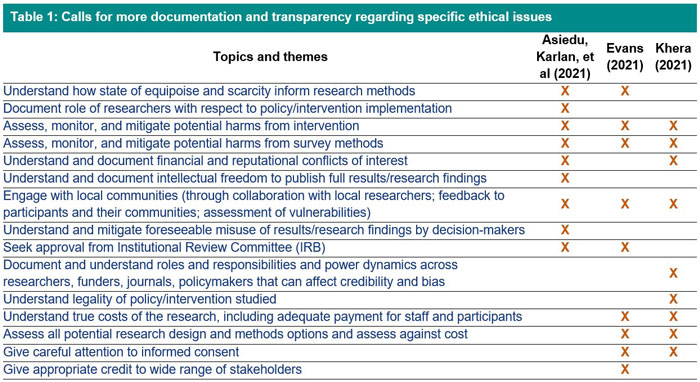

But gaps remain, and we hope our 3ie TREE Review Framework can help fill them and pull together consideration of all ethical risks under one process. First, when it comes to ethical best practices in our research, we do not yet share a set of ethical standards. To illustrate this point, Table 1 examines the key topics raised across three papers published in early 2021 that called on the international development community to document, assess, reflect on, and share more about our ethical decision-making in randomized controlled trials. The three papers shared common themes, though they used different language, and each raised unique but equally important issues. We think these arguments point to a need to establish standards around ethical practices.

[Asiedu, Karlan, et al. (2021); Evans (2021); Khera (2021)]

Second, there are gaps in the existing IRB process, particularly for low- and middle-income countries (LMICs). In addition to challenges related to the wide variation between different IRB reviews, IRBs do not yet consistently review research protocols for best practices in transparency and reproducibility, though there are efforts to promote this. So, even with a full IRB review, a research team does not receive a full review of all the practices they are establishing for ethical conduct of research.

Third, it is one thing to define best practices in a top-down policy, such as the 3ie TREE Policy, and another to implement these practices. We have found that research teams – within 3ie and among 3ie grantees – require additional technical support to integrate new practices and a broader understanding of ethical conduct into their research workflows. This process requires that these practices be built into the budgets and design stages of projects, and that technical support be available to research teams.

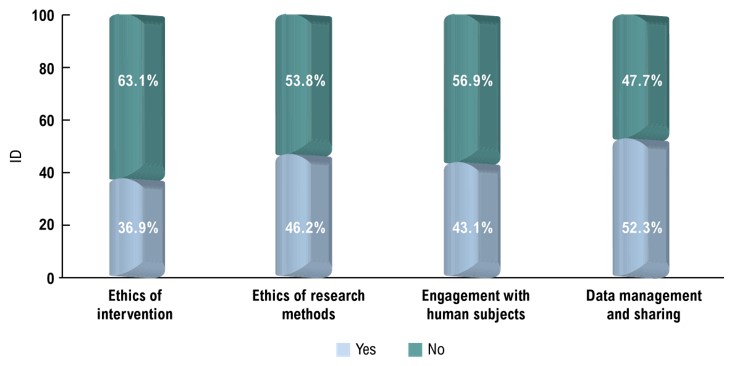

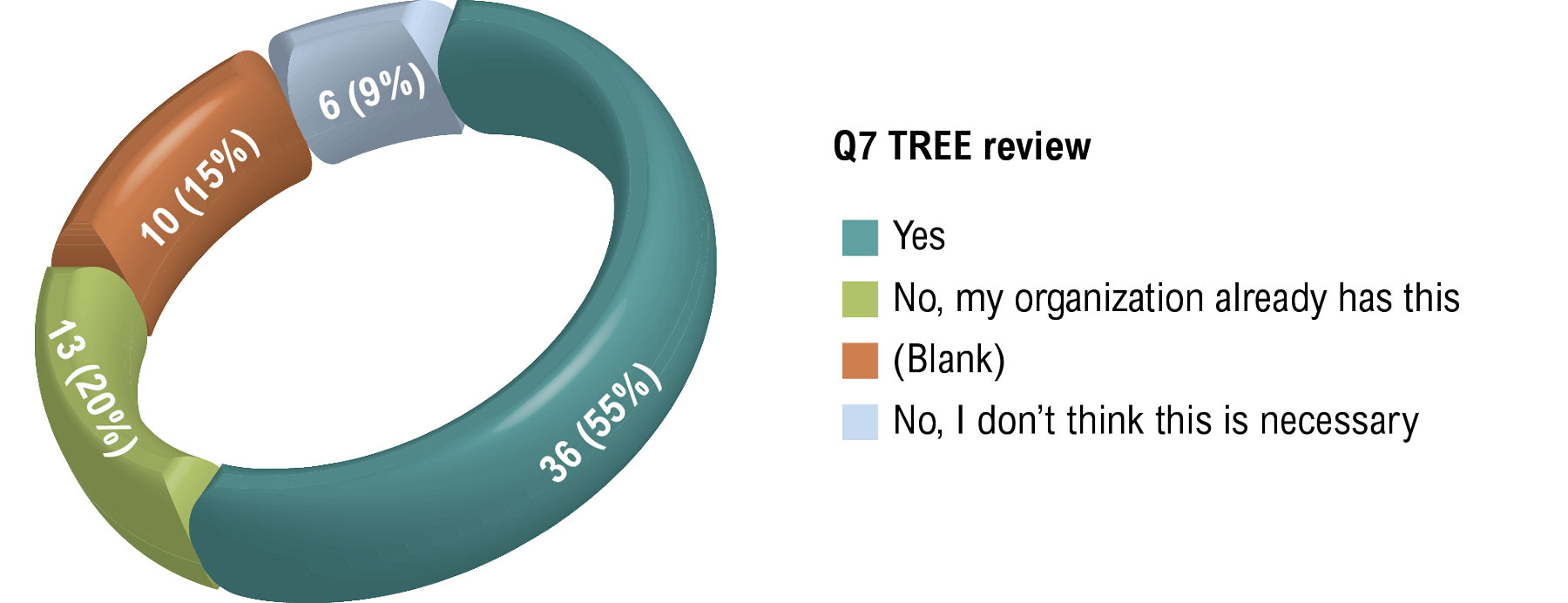

We are not alone in thinking about these issues. In late 2021, 3ie sent out a questionnaire regarding interest in TREE topics. Out of 65 respondents across research producers, consumers, and funders, more than half reported concerns regarding ethical data practices and nearly half had concerns with the ethics of the interventions the research community studies, research methods, and engagement with human subjects. Fifty-five percent of respondents replied they would be interested in learning more about a review process that examines risk through the lens of transparency, reproducibility, and ethics.

In terms of ethics, more respondents shared concerns regarding data management and sharing, and the ethics of research methods, and more than 35% had concerns regarding the ethics of the intervention and engagement with human subjects.

What have we done to develop this TREE Review Framework? We are relying on work to translate clinical research ethics standards for public policy and other relevant social science research and efforts to reimagine ethical oversight for human research protections. This effort has helped us shape 10 ethical standards to guide the TREE Review Framework within 3ie:

- Use transparency as a tool

- Maximize social value and meaningful use

- Balance power and align incentives

- Preserve standard of care

- Value and prioritize community engagement

- Use fair methods

- Ensure fair treatment of participants

- Ensure appropriate informed consent and protection of confidentiality

- Ensure favorable risk-benefit ratio for participants, bystanders, and staff

- Ensure favorable cost-benefit ratio

These standards underpin the TREE Review Framework's three components:

- TREE Questionnaire: We have developed a Questionnaire to define the questions we should ask ourselves and provide space to document the answers – particularly as they evolve over the research life cycle.

- Milestones: We recommend the research team consult the Questionnaire and document their responses at multiple points in the research life cycle: (i) prior to the funding decision, to ensure the funding request includes budget for all related best practices; (ii) prior to data collection (baseline, interim, final), to ensure protocols and practices align with requirements; and (iii) prior to final publication, to facilitate a final ‘ethics appendix’.

- Independent support: We recommend that an independent committee assess the research team’s responses and provide feedback. Like study monitoring or data and safety monitoring committees in clinical research, this independent committee can provide a timely, unbiased assessment of the research team’s decision-making. Within 3ie, this support is established as a peer review function to provide independent technical support to research teams as they navigate complex ethical issues.

We are currently pilot testing the TREE Review Framework for internal 3ie teams. Once we have completed pilot testing, we aim to share our experience and tools with 3ie research grantees and other organizations interested in tackling similar challenges in the design, implementation, and dissemination of research. Stay tuned.

We welcome the community’s input and feedback into this proposed process and tool. Please reach out to tree@3ieimpact.org with any suggestions, comments, questions, or ideas!